Give Your Agent Eyes and Ears

Low-power perception, emotional interaction, and Agent connectivity: the "Stripe" for AI-native devices.

The Problem

AI Agents Are Brilliant.

But They Can't See or Hear.

Vision & audio are prerequisites — not nice-to-haves — for Agents in the real world.

Agents can call APIs and run code — but they still can't see, hear, or speak. eyespot fills that gap.

The most critical AI infrastructure is open source. eyespot follows the same path.

Our Answer

Eyespot: an open-source hardware & software development platform that lets Agents quickly access multimodal-interactive smart devices.

Real-World Scenarios

SPOT → Understand → Act

Someone at the door? Pet on the sofa? Package arrived? eyespot lets your Agent perceive all of this in real time — and act on it.
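The SPOT → Understand → Act loop can be sketched in a few lines. This is an illustrative toy, not eyespot's implementation: the `Observation` type, the `understand` keyword matcher, and the `ACTIONS` table are all hypothetical names invented for this example, and a real understanding layer would use a vision-language model rather than string matching.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    source: str       # hypothetical device name, e.g. "doorbell_camera"
    description: str  # text the understanding layer produced for the scene


def understand(obs: Observation) -> str:
    """Toy stand-in for the understanding layer: map scene text to an event label."""
    text = obs.description.lower()
    if "package" in text:
        return "package_delivered"
    if "crying" in text:
        return "child_crying"
    return "unknown"


# Hypothetical action table: event label -> actions the Agent would take.
ACTIONS: dict[str, list[str]] = {
    "package_delivered": ["send_notification", "unlock_smart_lock"],
    "child_crying": ["switch_to_comfort_mode", "notify_parent"],
}


def spot_understand_act(obs: Observation) -> list[str]:
    """Run one pass of the loop: perceive, classify, then look up actions."""
    event = understand(obs)
    return ACTIONS.get(event, [])


actions = spot_understand_act(
    Observation("doorbell_camera", "Delivery person placing a package")
)
print(actions)
```

Unrecognized scenes map to an empty action list, so the Agent does nothing rather than guessing.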

"Anyone at the door?"

👁 Perceives: Doorbell camera detects a delivery person placing a package.

Agent → Sends notification + Unlocks smart lock

"How's grandma doing today?"

👁 Perceives: Camera detects prolonged inactivity — no movement for 2 hours.

Agent → Calls family + Returns AI-generated daily timeline

"Always watching. No prompt needed."

👁 Perceives: Camera sees you grab your keys at 8:02 AM.

Agent → Triggers 'leaving home' mode — lights off, windows closed

"Watch over the kids."

👁 Perceives: OvO camera reads facial expression — child is crying.

Agent → Switches to comfort mode + Notifies parent

"What does she want to do now?"

👁 Perceives: Robot detects high energy + laughter in the last 5 min.

Agent → Suggests a game, not a story — no manual switch needed

"Keep the pet company."

👁 Perceives: Microphone detects dog whining — alone for 3 hours.

Agent → Initiates play interaction + Logs behavior to owner

"What does this menu say?"

👁 Perceives: AI glasses parse foreign-language menu in first-person view.

Agent → Reads translated items aloud in real time

"Summarize what they said."

👁 Perceives: Pendant microphone transcribes 10-min conversation continuously.

Agent → Returns 3-bullet summary on demand

"Coach my pronunciation."

👁 Perceives: Device hears mispronounced word + reads visual scene context.

Agent → Corrects in real time + Generates situational drill

MULTIMODAL INTERACTION INFRASTRUCTURE

Hardware as Native Skills, for 🦞OpenClaw

THREE-LAYER ARCHITECTURE

The Eye

Perception Layer

Camera/ToF + mic array + speaker + night vision + edge AI chip

The Brain

Understanding Layer

Local multimodal VLM (0.5T–6T compute) + scene-specific lightweight models

The Bridge

Connection Layer

Agent framework adapter + RESTful API + MCP + WebRTC + MQTT

Hardware as Native Skills

eyespot packages cameras, microphones, and speakers as first-class Agent Skills. Three layers: The Eye (camera/ToF + mic + speaker), The Brain (local VLM, 0.5T–6T compute), The Bridge (Agent framework adapter). Your Agent calls them the same way it calls any tool — no custom drivers, no glue code.
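To make "calls them the same way it calls any tool" concrete, here is a minimal sketch of a skill registry with name-based dispatch. Everything in it is an assumption for illustration: the `skill` decorator, the `observe_scene` skill name, and the `call_tool` helper are hypothetical and are not taken from the eyespot SDK.

```python
import json
from typing import Any, Callable

# Hypothetical registry: skill name -> Python callable.
SKILLS: dict[str, Callable[..., Any]] = {}


def skill(name: str):
    """Decorator that registers a function as a named skill."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        SKILLS[name] = fn
        return fn
    return register


@skill("observe_scene")
def observe_scene(question: str) -> dict:
    # A real skill would query a camera and a model; this stub echoes the input.
    return {"question": question, "answer": "stubbed scene description"}


def call_tool(name: str, arguments_json: str) -> Any:
    """Dispatch a tool call the way many agent frameworks do: name + JSON args."""
    args = json.loads(arguments_json)
    return SKILLS[name](**args)


result = call_tool("observe_scene", '{"question": "Anyone at the door?"}')
print(result["answer"])
```

The Agent only sees a tool name and JSON arguments, so the hardware behind the skill can change without touching the Agent's code.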

Plug In Any Hardware

ONVIF cameras, RTSP streams, WebRTC endpoints, USB microphones, Bluetooth speakers — eyespot abstracts them all behind a unified API your Agent already understands.
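One common way to hide heterogeneous devices behind a single interface is an abstract base class with per-device adapters. The sketch below assumes hypothetical `Device`, `RtspCamera`, and `UsbMicrophone` classes and returns placeholder bytes instead of opening real streams; it shows the shape of the abstraction, not eyespot's actual API.

```python
from abc import ABC, abstractmethod


class Device(ABC):
    """Hypothetical unified interface: every device yields raw bytes on capture."""

    kind: str  # "video" or "audio"

    @abstractmethod
    def capture(self) -> bytes:
        ...


class RtspCamera(Device):
    kind = "video"

    def __init__(self, url: str) -> None:
        self.url = url

    def capture(self) -> bytes:
        # Placeholder: a real adapter would pull a frame from the stream.
        return b"frame:" + self.url.encode()


class UsbMicrophone(Device):
    kind = "audio"

    def __init__(self, index: int) -> None:
        self.index = index

    def capture(self) -> bytes:
        # Placeholder: a real adapter would read a PCM chunk from the device.
        return b"audio-chunk"


def capture_all(devices: list[Device]) -> dict[str, bytes]:
    """Callers treat every device identically, whatever the transport."""
    return {type(d).__name__: d.capture() for d in devices}


samples = capture_all([RtspCamera("rtsp://example.invalid/stream"), UsbMicrophone(0)])
print(sorted(samples))
```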

Flexible Inference: Local or Cloud

Local inference on capable hardware (0.5T–6T) — data stays on device, zero cloud dependency. Cloud inference for cost-sensitive deployments. Choose based on your hardware spec and privacy needs.
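The local-versus-cloud decision can be expressed as a small policy function. This is a sketch under stated assumptions: `choose_backend` is a hypothetical helper, `compute_t` is the device's compute in the same "T" units the page uses, and the 0.5 threshold is simply the low end of the quoted 0.5T–6T range.

```python
def choose_backend(
    compute_t: float,
    privacy_required: bool,
    min_local_t: float = 0.5,  # assumed threshold: low end of the quoted range
) -> str:
    """Pick "local" or "cloud" from the hardware spec and privacy needs."""
    capable = compute_t >= min_local_t
    if capable:
        # Capable hardware keeps data on the device.
        return "local"
    if privacy_required:
        # Data must stay on device, but the device cannot run the model.
        raise ValueError("privacy requires local inference, but hardware is too weak")
    return "cloud"


print(choose_backend(2.0, privacy_required=True))
print(choose_backend(0.1, privacy_required=False))
```

Raising an error in the privacy-required case is a deliberate choice here: silently falling back to the cloud would violate the privacy constraint.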

BUILT-IN SKILLS

Full-stack perception, understanding, and action — ready to use out of the box.

Scene Observation

Ask in natural language, get real-time scene descriptions.

Object Recognition

Identify people, pets, packages — structured JSON output.
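Structured output means an Agent can filter detections with ordinary code instead of parsing prose. The payload shape below (a `detections` list with `label` and `confidence` fields) is an assumed example, not eyespot's documented schema, and `parse_detections` is a hypothetical helper.

```python
import json
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    confidence: float


# Assumed example payload; the real schema may differ.
SAMPLE = json.dumps({
    "detections": [
        {"label": "person", "confidence": 0.94},
        {"label": "package", "confidence": 0.81},
        {"label": "pet", "confidence": 0.32},
    ]
})


def parse_detections(payload: str, min_confidence: float = 0.5) -> list[Detection]:
    """Decode the JSON payload and keep detections above the confidence floor."""
    data = json.loads(payload)
    return [
        Detection(d["label"], float(d["confidence"]))
        for d in data["detections"]
        if d["confidence"] >= min_confidence
    ]


labels = [d.label for d in parse_detections(SAMPLE)]
print(labels)
```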

Event Detection

Always-on monitoring, proactive event push to Agent.
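Proactive push inverts the usual request/response flow: the device side publishes events and the Agent registers handlers for the types it cares about. A minimal in-process sketch, assuming a hypothetical `EventBus` class and an event dict with a `type` key:

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """Hypothetical in-process bus: handlers subscribe by event type."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def push(self, event: dict) -> int:
        """Deliver the event to every matching handler; return how many ran."""
        handlers = self._handlers.get(event["type"], [])
        for handler in handlers:
            handler(event)
        return len(handlers)


received: list[dict] = []
bus = EventBus()
bus.subscribe("package_delivered", received.append)

delivered = bus.push({"type": "package_delivered", "camera": "doorbell"})
ignored = bus.push({"type": "motion", "camera": "garage"})
print(delivered, ignored, received)
```

In a deployment, the same pattern would typically run over a network transport such as a webhook or a message broker rather than in one process.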

Visual Tasks

Set complex tasks with visual conditions via natural language.
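Once a natural-language request such as "tell me if a package sits at the door for more than ten minutes" has been interpreted, it can be stored as a simple condition-plus-action rule. The sketch below covers only that stored form; the `VisualTask` fields and the `evaluate` function are hypothetical, and the natural-language parsing step is out of scope.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class VisualTask:
    label: str            # what must be visible, e.g. "package"
    min_visible_s: float  # how long it must stay visible, in seconds
    action: str           # action name to fire when the condition holds


def evaluate(task: VisualTask, visible_for_s: dict[str, float]) -> Optional[str]:
    """Return the task's action if its visual condition is met, else None.

    `visible_for_s` maps each currently visible label to the number of
    seconds it has been continuously in view.
    """
    duration = visible_for_s.get(task.label)
    if duration is not None and duration >= task.min_visible_s:
        return task.action
    return None


task = VisualTask(label="package", min_visible_s=600, action="notify_owner")
print(evaluate(task, {"package": 900.0}))
print(evaluate(task, {"package": 60.0}))
```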

Timeline Recall

AI-understood event timeline, not traditional video playback.
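A timeline of understood events is just a list of timestamped summaries, which makes queries like "what happened this morning?" or "how long was the longest quiet period?" straightforward. The `TimelineEvent` type and both helper functions below are hypothetical illustrations with made-up sample data.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class TimelineEvent:
    at: datetime
    summary: str


def recall(events: list[TimelineEvent], start: datetime, end: datetime) -> list[str]:
    """Summaries of events with start <= at < end, in chronological order."""
    hits = [e for e in events if start <= e.at < end]
    return [e.summary for e in sorted(hits, key=lambda e: e.at)]


def longest_gap(events: list[TimelineEvent]) -> timedelta:
    """Longest stretch between consecutive events (zero if fewer than two)."""
    times = sorted(e.at for e in events)
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    return max(gaps, default=timedelta(0))


day = datetime(2025, 1, 6)
events = [
    TimelineEvent(day.replace(hour=8, minute=2), "Picked up keys"),
    TimelineEvent(day.replace(hour=8, minute=0), "Entered kitchen"),
    TimelineEvent(day.replace(hour=10, minute=2), "Returned home"),
]

morning = recall(events, day.replace(hour=8), day.replace(hour=9))
gap = longest_gap(events)
print(morning, gap)
```

The gap calculation is the same idea behind an inactivity alert: compare the longest quiet period against a threshold.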

Open API

RESTful API, Webhook, deep Home Assistant integration.

Application Scenarios

Three Hardware Categories

Through the Eyespot framework, three major hardware categories achieve a complete physical-world perception loop: SPOT → UNDERSTAND → ACT.

Smart Home Terminal


AI Security Camera

On-device NPU for real-time fall detection & intrusion recognition, local inference with zero cloud upload

AI Smart Doorbell

Face recognition + voice intercom, automatic stranger alerts, delivery auto-logging

Elderly/Child Care Camera

Proactive anomaly sensing, inactivity alerts, real-time connection with remote family members

AI Companion Robot


OvO Desktop Robot

In-house reference hardware with camera + screen + voice all-in-one, a family AI assistant that gets things done

AI Toy / Children's Companion

Visual emotion sensing, adaptive dialogue content, an engaging interactive experience

Pet Companion / Monitor Robot

Pet behavior recognition + remote interactive feeding, real-time companionship when you're away

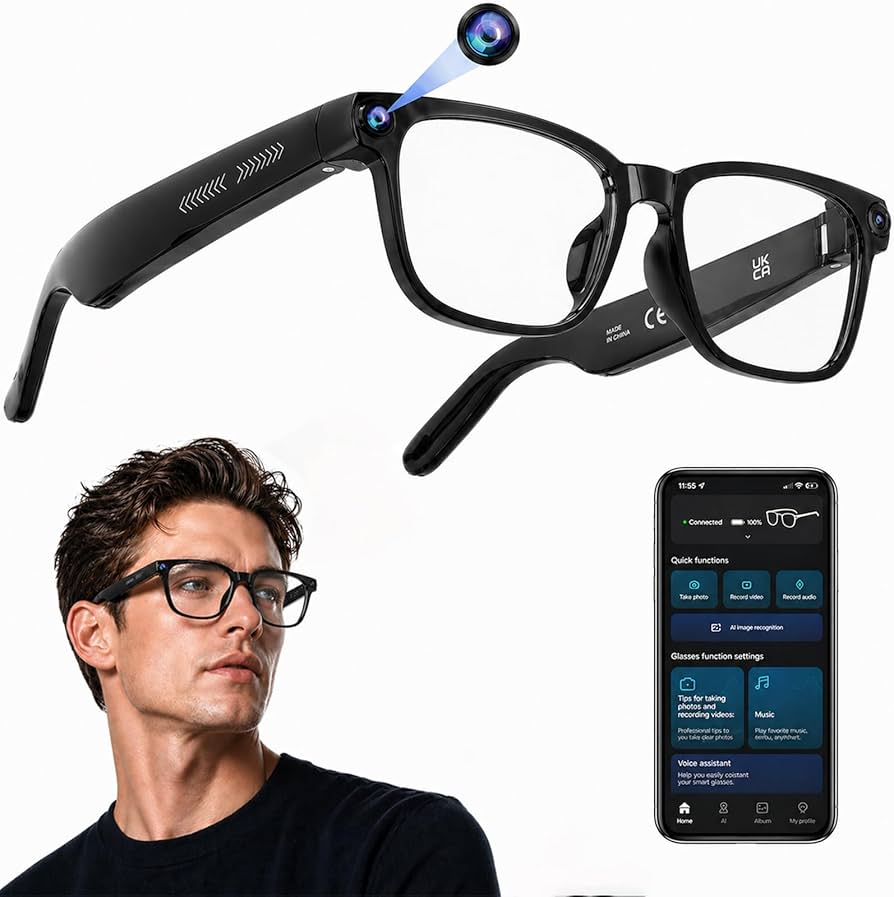

Smart Wearables


AI Smart Glasses

First-person visual understanding, real-time scene parsing, seamless natural voice interaction

AI Pendant / Wearable Agent

Ultra-compact edge AI terminal, a portable Agent perception node that captures life's key moments

AI Language Practice Device

Real-time speech recognition + visual context sensing, immersive language learning & oral practice

Covers the full 0.5T–6T compute range, with OvO reference hardware for rapid development and mass production.

HARDWARE INQUIRY

AI Developer or Hardware Manufacturer? Let's Talk.

Whether you're interested in OvO hardware procurement, ODM customization, or enterprise-scale deployment — we'd love to help you find the right solution.

ECOSYSTEM

Works With Any Agent Framework

eyespot is framework-agnostic. If your Agent can call tools, it can use eyespot Skills.

TECHNOLOGY COMPARISON

Eyespot vs XiaoZhi AI vs LiveKit

Three projects with fundamentally different positioning. XiaoZhi AI is a voice companion, LiveKit is A/V transport infrastructure, and eyespot is the only open-source framework that packages hardware perception as Agent Skills.

| Dimension | XiaoZhi AI | LiveKit | eyespot |
|---|---|---|---|
| Positioning | Voice Companion | Real-time A/V Cloud Infra | Hardware Perception Skill Framework |
| Visual Perception | | | |
| Audio Perception | | | |
| Structured JSON Output | | | |
| Proactive Event Push | | | |
| Hardware Product | ESP32 ($7 DIY) | None | OvO Series |
| Agent Framework Integration | Weak | Voice Agent Pipeline | Native Skill Calls |
| Open Source License | MIT | Apache 2.0 | MIT |
| Business Model | Ecosystem + HW Licensing | Open Core + Usage Cloud | Open SDK + HW + Cloud |
| Valuation / Funding | Shifang Ronghai Ecosystem | $1B (Series C) | Pre-Seed |

Key Differentiator

XiaoZhi AI gives Agents a voice; LiveKit gives Agents A/V transport; eyespot gives Agents the ability to see, hear, and understand the physical world. The three are complementary, not competitive: eyespot fills the perception-layer gap.

BUSINESS MODEL

Open Source Free. Commercially Sustainable.

The Core SDK is free and open source forever. Revenue comes from hardware sales, pay-per-analysis Agent Deployment, and enterprise plans. Contact us for specific pricing.

Open Source SDK

Free Forever

MIT licensed. The core framework is fully open source. Any developer can use, modify, and distribute freely.

- eyespot Core SDK

- MCP Server

- Community Support

- GitHub Docs

OvO Hardware

One-Time Purchase

Official reference hardware built on eyespot: a plug-and-play flagship Agent Skill.

- Lite / Pro / Ultra tiers

- Pre-installed eyespot

- Ready to ship

- Hardware warranty

Agent Deployment / Managed Model

Pay Per Analysis

Managed inference, device management, and event stream processing. No infrastructure to build; pay only for what you use.

- Managed inference

- Fleet management

- Event stream processing

- SLA guarantee

Enterprise

Custom Plan

Private deployment, custom integration, and dedicated support for large-scale deployments and compliance needs.

- Private deployment

- Custom integration

- Dedicated support

- Compliance certification

GET PRICING

Want specific pricing? Let's talk.

Whether it's Agent Deployment pay-per-analysis plans, hardware bulk procurement, or enterprise private deployment — we'll tailor a solution for you.